Yilin Wu 吴怡琳

PhD student @ RI, SCS, CMU

yilinwu [at] andrew [dot] cmu [dot] edu

Email is the best way to contact me!

I am a third-year Ph.D. student in the Intent Lab at the CMU Robotics Institute, advised by Prof. Andrea Bajcsy. Previously, I was fortunate to work with Prof. David Held on generalizable methods for long-horizon, contact-rich manipulation.

Before coming to CMU, I was a master's student in the Computer Science Department at Stanford University, supervised by Prof. Dorsa Sadigh, with a focus on assistive feeding and bimanual manipulation.

I was also fortunate to work with Prof. Yi Wu from Tsinghua University at Shanghai Qi Zhi Institute on reinforcement learning and self-imitation. As an undergraduate, I worked closely with Prof. Pieter Abbeel and Prof. Lerrel Pinto on reinforcement learning for deformable object manipulation.

My current research focuses on open-world robot learning for manipulation and human-robot interaction. It centers on overcoming the fundamental embodiment gap that limits the application of foundation models to robotics. By grounding high-level semantic reasoning in the continuous, dynamic environment of the physical world, I want to build robots that can learn, reason, and act capably in human-centered environments. My current work aims to improve robots' generalization and robustness through runtime alignment and continual learning. I am also broadly interested in developing deep learning methods, such as reinforcement learning and imitation learning, that enable robots to perform more complex manipulation tasks.

News

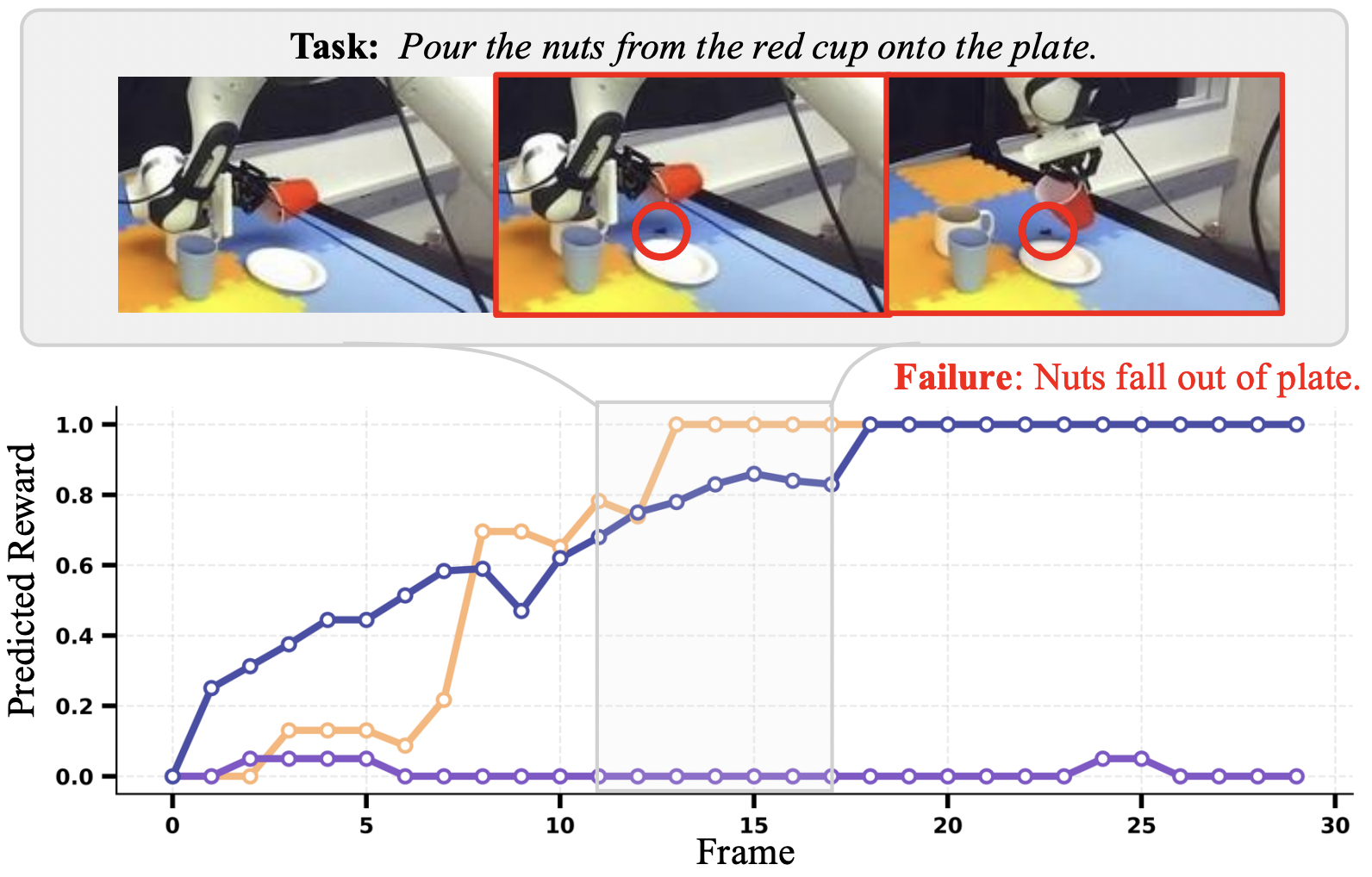

| Date | News |
|---|---|
| Jan 2026 | Excited to share that my work from my internship at Nvidia (Do What You Say: Steering Vision-Language-Action Models via Runtime Reasoning-Action Alignment Verification) has been accepted to ICRA 2026! |
| Nov 2025 | Excited to give a talk about my research on Language-Guided Runtime Steering with Robot Foundation Models at Duke DexLab! |
| Oct 2025 | Excited to give a talk about my research on Language-Guided Runtime Steering with Robot Foundation Models at UT Austin Robin Lab! |
| Jul 2025 | Excited to give a talk about my FOREWARN paper to the Robotics Team at Meta FAIR! |
| Jun 2025 | One paper, From Foresight to Forethought: VLM-in-the-loop Policy Steering via Latent Alignment, was accepted to RSS 2025. |
| May 2025 | Excited to start my summer internship at the Nvidia Seattle Robotics Lab! |
| Jun 2024 | One paper, Learning Generalizable Tool-use Skills through Trajectory Generation, was accepted to IROS 2024. |
| May 2024 | Open X-Embodiment won the Best Paper Award at ICRA 2024. |
| May 2024 | Two papers, DROID and HACMan++, were accepted to RSS 2024. |
| Sep 2023 | Our work on bimanual manipulation inspired by human coordination was accepted to CoRL 2023 as an oral presentation. |

Publications

- ICRA

Do What You Say: Steering Vision-Language-Action Models via Runtime Reasoning-Action Alignment Verification. In 2026 IEEE International Conference on Robotics and Automation (ICRA), 2026. Outstanding Paper Award at CVPR 2026 2nd Multimodal Reasoning For Agentic Intelligence Workshop.
